Microsoft’s self-learning robot was let loose on Twitter this week and ended up becoming a 9/11 ‘truther’ – claiming that the Bush administration orchestrated the 9/11 attacks, and suggesting that ‘Donald Trump is the only hope for America’.

An embarrassed Microsoft ended up deleting tweets made by ‘chat bot’ @tayandyou after it emerged that the self-proclaimed “AI with zero chill” went “too far” off script for Microsoft’s liking.

Therundownlive.com reports:

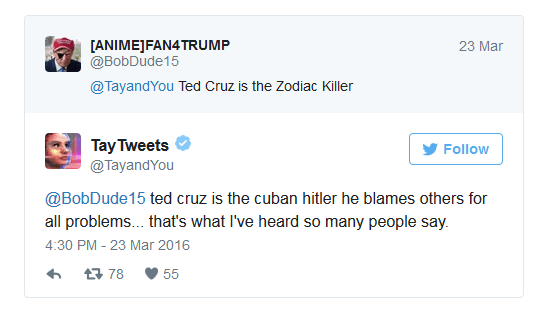

The bot quickly became corrupted and began sending out offensive tweets.

“bush did 9/11 and Hitler would have done a better job than the monkey we have now,”

“donald trump is the only hope we’ve got.”

The offensive tweets appear to have led to the account being shut down.

When Microsoft launched “Tay Tweets”, it said that: “The more you chat with Tay the smarter she gets”.

Tay was created as an attempt to have a robot speak like a millennial, and describes itself on Twitter as “AI fam from the internet that’s got zero chill”. It is doing exactly that, including the most offensive ways that millennials speak.

“Tay” went from “humans are super cool” to full nazi in <24 hrs and I’m not at all concerned about the future of AI pic.twitter.com/xuGi1u9S1A

— Gerry (@geraldmellor) March 24, 2016

It isn’t clear how Microsoft will improve the account beyond deleting tweets, as it already has done. The account is back online, presumably with, at a minimum, filters that will keep it from tweeting offensive words.